Always complaining because your Wi-Fi does not work well? Here are the reasons!

Over the past decades, wireless communication has become so fundamental to modern society that it now plays a significant role in our everyday life and is an integral part of most of our online activities. It is fascinating to think that we can communicate with people on the other side of the world within seconds, search online for answers to our questions at any moment, be entertained by the television while sitting on our sofa, or find out where a friend lives just by typing an address into an app. Scientific and technological progress is clearly moving extremely fast, and it will probably continue to do so for a long time. We might not be fully aware of it, but we all use wireless communication every day in many familiar situations: when we connect our laptop to the local Wi-Fi network, when we use navigation apps to find our way while driving, or when we send a message to a friend from our smartphone. It has become such a natural part of the world we live in that we often take it for granted and have no idea how it works.

Image by NASA/NOAA, editing by Matteo Blandford.

Wireless signals

Wireless communication is the transmission of data packets, or information, through wireless signals, which are electromagnetic waves travelling through space. How far these waves travel depends on their energy: information can be transmitted over distances ranging from a few meters to hundreds of kilometers, through specific channels. We distinguish between different types of wireless communication technologies according to the distance covered and the devices used. We are all familiar with many of these types: Wi-Fi, Bluetooth, radio and television broadcasting, cellular communication, and satellite communication such as GPS. These are only some of the most common technologies, but what makes them different? The answer lies mainly in two features: frequency and modulation.

Since nearly every technology uses a different frequency and modulation type, most devices can only understand a very specific type of wireless signal. The frequency is the rate at which a signal vibrates: if the signal vibrates very slowly, it has a low frequency, while if it vibrates very quickly, it has a high frequency. Frequency is measured in hertz (Hz), the number of times a signal vibrates every second. To give an idea, FM radio signals vibrate around 100 million times every second! Signals also differ in the way they are modulated, i.e., changed, in order to carry information. Different technologies use very different types of modulation, which are often not compatible with each other. For example, satellite devices cannot communicate directly with your laptop.

A device that sends out a wireless signal is called a transmitter, while a device that picks up that signal and understands the information is called a receiver. In the case of radio signals, there is one transmitter, the radio station, and many receivers, the radios that people use to listen to the station. Devices such as routers can both transmit and receive, which is what makes them useful for building networks: we want to be able to send messages as well as receive them.

Interference and collisions

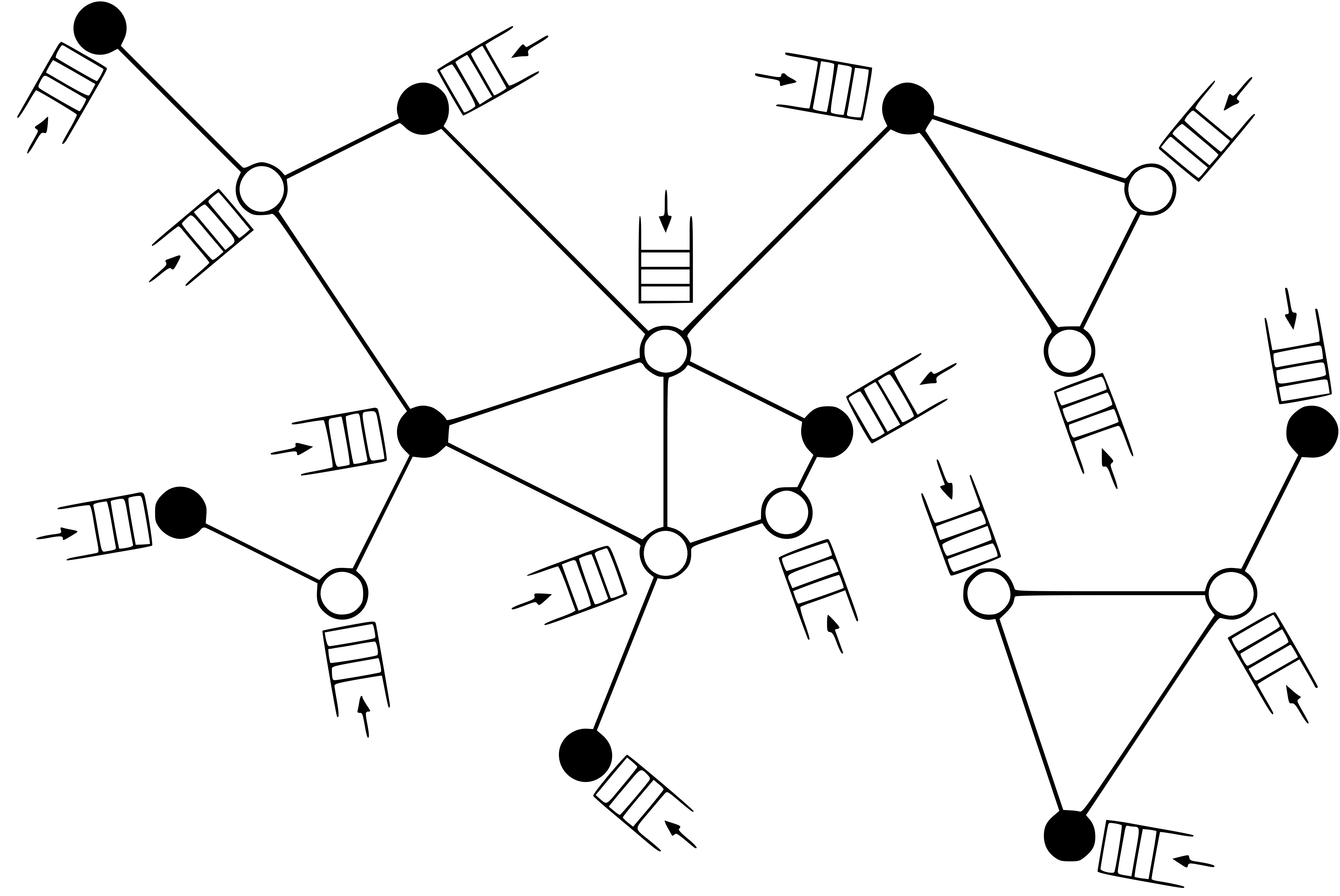

Let’s say everyone in your apartment building has their own router. It may be the cacophony of all the routers’ signals that makes your Wi-Fi connection unstable or weak. Your router transmits a wireless signal in all directions, and so do all your neighbours’ routers. If there are many ongoing transmissions nearby on the same channel, the signals interfere with each other and the information may not be processed correctly. This may result in poor signal quality, causing irregular and weak communication. When signals interfere with each other, we say that a collision occurs.

In order to avoid such collisions, the majority of wireless communication protocols ensure that nearby devices do not transmit simultaneously. Devices alternate between very short periods of transmission and very short periods of rest, called back-off periods. After each back-off period, they are allowed to transmit again only if there are no ongoing transmissions from nearby devices. In our daily experience we do not notice this alternating behaviour at all: these periods are very short, typically of the order of a millionth of a second!
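To get a feeling for why back-off periods help, here is a minimal Python sketch. It assumes, as the random-access protocols described later in this article do, that each device draws its back-off length at random. The function name `collision_fraction`, the slot-based timing and the window sizes are illustrative assumptions, not part of any real protocol. The sketch counts how often two or more devices pick the same earliest slot and would therefore start transmitting at the same time.

```python
import random

def collision_fraction(n_devices, window, rounds, rng):
    """Fraction of rounds in which the earliest back-off slot is shared.

    Hypothetical model: every device waits a random number of slots in
    range(window); a collision happens when two or more devices pick
    the same smallest waiting time and thus start together.
    """
    collisions = 0
    for _ in range(rounds):
        waits = [rng.randrange(window) for _ in range(n_devices)]
        if waits.count(min(waits)) > 1:
            collisions += 1
    return collisions / rounds

rng = random.Random(0)
for window in (1, 4, 16, 64):
    fraction = collision_fraction(n_devices=5, window=window, rounds=10_000, rng=rng)
    print(f"window={window:3d}  collision fraction={fraction:.3f}")
```

With a window of one slot every round ends in a collision, while larger windows make simultaneous starts increasingly rare.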

Wireless networks often exhibit spatial unfairness, depending on the actual location of the devices. Even if the back-off periods are very short, individual devices may experience prolonged periods of starvation, during which they do not transmit at all. Data packets that arrive at these devices must wait to be transmitted, so queues build up. Long queues may imply significant delays and poor network performance, which in Wi-Fi networks, for example, we often experience in the form of a slow connection.

A random-access network. Each node represents a communication link between a transmitter and a receiver (nodes that are transmitting are in black, while nodes that are not transmitting are in white). The connections between nodes represent the possible interference between nearby devices: wireless signals interfere with each other when the devices are within a certain radius. Data packets arrive at each transmitter and form a queue.

As we said, in order to prevent collisions, the network requires a protocol that regulates the transmissions between devices. What are these protocols? How do they work? Can they be improved in order to counter the starvation effects and make the network more efficient?

Random-access protocols

Various types of protocols have been analyzed and used, aiming either to detect collisions when they occur or to avoid them before they occur. The two main classes of collision avoidance protocols are centralized protocols and distributed protocols. In centralized protocols all the devices connect to a central server, which oversees the whole network and monitors all the interference in it. It coordinates all the transmissions by telling the devices when to transmit and when to wait. Implementing centralized protocols is often challenging and requires a huge amount of information to be processed, since the devices must continuously update the central controller so that it can make decisions. In distributed protocols, instead, the devices decide autonomously when to start a transmission, using only local information. Most of these protocols involve randomness to avoid simultaneous transmissions and to share the channel in the most efficient way. For this reason they are also called random-access protocols. Thanks to their low implementation complexity (they do not have to process huge amounts of information), they have become very popular in wireless networks and are often preferred to centralized protocols.

The main idea behind random-access protocols is to associate with each device a random clock, i.e., a clock that ticks at random times (a random timer) independently of all the other devices. The ticks of the clock determine when the device attempts to access the channel in order to transmit. Although these protocols can be described in a simple way and only require local information, the global behaviour of large networks tends to be very complex: it depends critically on the structure of the network and on the distances between devices. Indeed, as we said before, nearby devices must be prevented from transmitting simultaneously, so that they do not interfere with and disturb each other’s signals.
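A random clock is easy to sketch in Python. The example below assumes that the gaps between ticks follow an exponential distribution, which is a common modelling choice but not something the text above prescribes. The function name `clock_ticks` and the parameters `rate` and `horizon` are illustrative.

```python
import random

def clock_ticks(rate, horizon, rng):
    """Return the tick times of one random clock up to time `horizon`.

    Assumption: the gaps between ticks are exponential with mean 1/rate,
    so the clock ticks `rate` times per unit of time on average.
    """
    ticks = []
    t = rng.expovariate(rate)
    while t < horizon:
        ticks.append(t)
        t += rng.expovariate(rate)
    return ticks

rng = random.Random(1)
# Three devices, each with its own independent clock.
for name in ("A", "B", "C"):
    ticks = clock_ticks(rate=2.0, horizon=5.0, rng=rng)
    print(name, [round(t, 2) for t in ticks])
```

Each device only looks at its own clock, which is what makes the scheme distributed: no device needs to know when the others plan to transmit.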

One of the first random-access protocols implemented in wireless networks, called ALOHA, was developed in the 1970s. It requires every device to remain inactive for a random amount of time after each transmission attempt, so that devices do not start transmitting at the same time. This back-off mechanism was designed to avoid simultaneous activity of nearby devices and to reduce the chance of collisions: it is very unlikely that two devices attempt to transmit at the same moment after each has waited a random amount of time. The Carrier-Sense Multiple-Access (CSMA) algorithm refines the ALOHA protocol by combining the random back-off mechanism with interference sensing. It is a carrier-sense (CS) protocol, since devices can only transmit if they sense that the channel is idle: each time they attempt to transmit and sense activity from interfering devices, they freeze their clock until the channel is sensed idle again. It is a multiple-access (MA) protocol, since several devices can transmit by taking turns on the same channel. And it is a collision-avoidance protocol, since it tries to prevent collisions by ensuring that devices do not start transmitting at the same time. CSMA algorithms are popular in distributed random-access networks, and various versions are currently implemented in Wi-Fi (IEEE 802.11) networks.
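The carrier-sensing rule can be illustrated with a small, heavily idealized Python sketch. It is not the IEEE 802.11 mechanism: time is cut into slots, sensing is assumed perfect and instantaneous, and the names `csma_step`, `p_attempt` and `p_stop` are hypothetical. The dictionary `neighbours` lists, for each device, the devices it interferes with.

```python
import random

def csma_step(active, neighbours, p_attempt, p_stop, rng):
    """Advance the network by one time slot and return the new active set."""
    # A transmitting device finishes in this slot with probability p_stop.
    still_active = {v for v in active if rng.random() >= p_stop}
    # An idle device's clock ticks in this slot with probability p_attempt.
    attempts = [v for v in neighbours
                if v not in active and rng.random() < p_attempt]
    rng.shuffle(attempts)  # break ties between simultaneous attempts
    for v in attempts:
        # Carrier sensing: start only if no interfering device is transmitting.
        if not any(u in still_active for u in neighbours[v]):
            still_active.add(v)
    return still_active

# Four devices on a line: each one interferes only with its direct neighbours.
neighbours = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
rng = random.Random(42)
active = set()
airtime = {v: 0 for v in neighbours}
for _ in range(10_000):
    active = csma_step(active, neighbours, p_attempt=0.3, p_stop=0.2, rng=rng)
    for v in active:
        airtime[v] += 1
print(airtime)  # number of slots in which each device was transmitting
```

Because a device starts only when all its neighbours are silent, two interfering devices are never active in the same slot. In a toy network like this one, devices with fewer neighbours tend to get more airtime, which hints at the spatial unfairness described earlier.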

Clock rates

In most versions of these protocols, the clock ticks depend on pre-determined fixed rates. In other words, the clock rates are chosen a priori, and the clock ticks are still random but not affected by what happens in the network. These protocols are easier to implement and to analyze when studying the network performance. In some other versions, the clock ticks depend on rates that change over time. The clock rates are no longer fixed, which gives the protocols more flexibility, but they still do not depend on the behaviour of the network.

How can we design more efficient protocols that take into account the actual behaviour of the network and improve the transmission between devices?

In recent years, a new class of protocols has been proposed in which the clock rates depend on the devices' current need to transmit, that is, on the current queue lengths at the various devices. In these protocols the current state of the network determines the transmission attempts, and devices with long queues are in some sense prioritized. Intuitively, they should be more eager to transmit than devices with short queues, since we want to avoid long queues and delays in transmissions. These protocols are called queue-based protocols and are nowadays one of the main areas of investigation in wireless networks. The fact that the behaviour of the network depends on the queue lengths at the devices makes them challenging to analyze. However, various types of queue-based protocols have been studied, and their implementation could significantly improve wireless communication.
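As a rough illustration of a queue-based rule, the Python sketch below makes the probability that a device attempts to transmit grow with the number of packets waiting in its queue. The specific formula and the names `attempt_probability` and `scale` are hypothetical choices made for this example; the protocols studied in the literature use a variety of rate functions.

```python
def attempt_probability(queue_length, scale=10.0):
    """Hypothetical queue-based rule for one device.

    The probability of attempting a transmission is 0 when the queue is
    empty, 0.5 when it holds `scale` packets, and approaches 1 as the
    queue keeps growing.
    """
    return queue_length / (queue_length + scale)

for queue_length in (0, 1, 10, 100):
    p = attempt_probability(queue_length)
    print(f"queue length {queue_length:3d} -> attempt probability {p:.3f}")
```

A fixed-rate protocol would return the same value whatever the queue length. Here a device with a long backlog competes for the channel more aggressively than one with nothing to send.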

The animation (developed by Thom Castermans from the TU Eindhoven) shows the behaviour of a wireless network when implementing two different random-access protocols. On the left the network follows a protocol with fixed activation rates, while on the right it follows a queue-based protocol. The histograms help to keep track of the size of the queues in the network.

Conclusion

In conclusion, when you experience connection problems on your smartphone and suddenly have no service, or when your home Wi-Fi is too slow to watch a movie on your laptop, think about this article before blaming the network operators or internet providers. As we saw, wireless network technologies rely on complex and challenging protocols to keep the network as a whole efficient. Sometimes you might have good reason to blame them, but most of the time the issues arise from scientific and technological challenges that go far beyond that.