This is a question I have been thinking about for the last two years. In this article, I will give a little overview of what I have discovered in that time.

Algorithmic collusion can mean quite a variety of things. It could, for example, refer to a situation in which the learning algorithms gain control of humanoid robots, start bearing arms and work together to exterminate all humans.

No reason to panic, the setting I have been focusing on is much more mundane. Specifically, I have been studying how algorithms that determine prices for companies selling their products on platforms such as Amazon and bol.com can learn to charge prices that are higher than would be competitive. Companies are prohibited by law to contact each other and arrange the prices they will charge. Each company has to decide on the price they will charge based on their observations of the market. But when algorithms are used it is much more difficult to control this. The problem with such algorithms is that they could be legal. In many cases, such algorithms are actually legal, even though they disfavour clients.

As long as the algorithms don’t explicitly communicate to raise the prices, they are perfectly legal! More broadly speaking, algorithmic collusion is a type of algorithmic cooperation. In most cases, cooperation is seen as a desirable property of algorithms, and much research focuses on making algorithms more cooperative. For example, self-driving cars must learn to cooperate to avoid collisions. The flip side of this is that the algorithms designed with cooperation in mind may also be employed in settings, such as pricing, where cooperation is detrimental to humans. So how could we use research to prevent algorithmic collusion without hindering algorithmic cooperation?

Explaining Artificial Intelligence

The approach I have been exploring follows the idea of explainability in AI. The idea is the following: if we can identify the mechanisms that lead to collusion, which is what we mean with explainability, then policymakers can prohibit the use of these mechanisms in settings in which collusion would be harmful. Up until now, I have done this for two algorithms that are quite different. The first makes use of simple gradient ascent techniques and the second is a reinforcement learning algorithm. What is required in each of these algorithms for collusion to occur is fundamentally different. Don’t worry if you are not familiar with these terms, we will explain how they work later in this article.

Before we dive into these algorithms I’ll explain what collusion is more specifically in a pricing duopoly. A duopoly means a market in which two firms are competing. They both sell the same product and at each (discrete) point in time get to decide the price they charge for the product. They then sell some quantity of the product and based on this may adjust the price they charge.

If the firms are competing properly, they will want to charge a price that maximizes their own revenue. The price pair that does this for both firms is the competitive (or Nash) price (we assume that there is only one such price pair for simplicity). When this price pair is charged none of the companies wants to deviate from it. Alternatively, the two firms could behave as if they were a single firm and maximize their joint revenue. This will typically result in a higher price and if the firms are similar also in higher revenue for both firms. There are however cases in which behaving like a monopolist is not profitable for both firms. Hence, if these companies use algorithms to determine the prices they will charge, then the algorithm needs to distinguish between the cases when it is and when it isn’t profitable to collude (maximize the joint revenue).

In addition, the algorithm should not be exploitable. This means that it should learn to price optimally (so collusively when this is profitable for both firms and competitively otherwise) when it learns against itself and price competitively when it learns against a competitive algorithm. If this is not the case, a competitor could use a competitive algorithm and would profit from the fact the collusive algorithm is trying to collude. The competitor would then learn a best-response to the colluding algorithm, which means that it earns much larger profits than the collusive algorithm.

Deliberate collusion

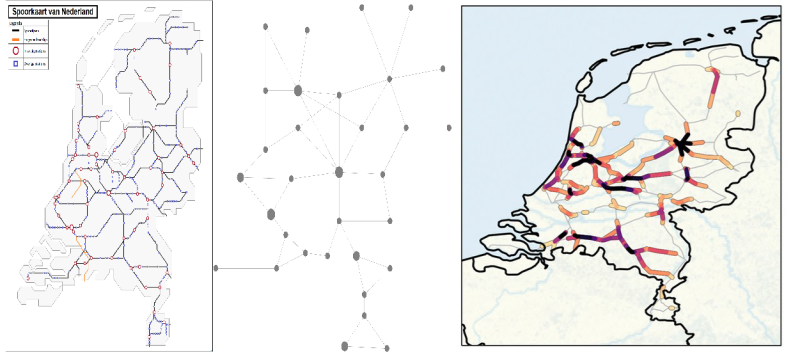

For the first algorithm, I worked together with Arnoud den Boer to design an algorithm that learns to collude in a pricing duopoly to demonstrate how this could be done. We basically use the same algorithm twice in separate sequential phases, one phase to learn the competitive price and one phase to learn the collusive price. Then we have a mechanism by which the algorithm decides which of the two versions performs better (as measured by the profits they lead to). The version that performs better is then selected to be run for an extra phase. By repeating this process and gradually lengthening the last phase (where the better version is used) in relation to the rest, the algorithm eventually learns the optimal price when it plays against itself.

So what mechanism is leading to collusion here? The algorithm is crucially split into components that have a different task and then decides which task is more profitable. For both tasks (learning the competitive and learning the collusive price) to be learned the algorithms of both players must have access to the revenue earned by the competitor. At first, this may seem like quite a hindrance to collusion, but it turns out that many online shops actually make their revenue for a specific product known implicitly by sharing how many items have been sold in a specific time or by sharing how many items they have left in their inventory.

How could this be detected or regulated? Well, the phasic structure of the algorithm means that the price sequences produced by two companies using such an algorithm will have many synchronized price jumps. By detecting these, regulation authorities could get an indication of which firms might be using such algorithms. Based on this they could gain access to the code used by the firms and from the code it becomes clear that the algorithm was written with the intent to collude. If this algorithmic intent is made illegal, such collusion could easily be regulated.

Spontaneous collusion

The second algorithm I have been studying uses the famous Q-learning algorithm. This is a reinforcement learning algorithm, which means that it takes actions based on some picture of the environment that it has, it observes what happens when it takes that action and uses that feedback to change the picture of the environment that it bases its decisions on. DeepMind and AlphaGo are examples of such an algorithm. In our case, the algorithm decides what price to charge for a product and then observes the demand (or profit) it receives by charging that price. The picture of the environment it has in our case can be thought of as keeping track of how valuable it is to use each price. Based on this the algorithm could always choose the action it considers to be most valuable at each point in time.

What makes the Q-learning algorithm different is that it has a memory. Using this, it can base its decisions about prices on what the competing algorithm did in the previous period. The result is that it can learn strategies such as tit-for-tat (always playing the action the opponent took in the previous period), which are known to be useful for colluding.

Studying the dynamics of two Q-learning algorithms is very difficult. This is because the environment from the perspective of one of the algorithms changes as the other algorithm learns (the environment is non-stationary). We say that the competing algorithm gives rise to noise in the environment. The problem can be simplified if we assume that the algorithms have perfect information. Typically, the updates to the picture of the environment (Q-values) are made based on a single reward realization. But you could also let the algorithm run unchanged for several periods K (the batch size) and then update the Q-values based on all the samples (information) you collected. If you let K grow large (so that your update is based on more and more information), you can consider the update to be based on a perfect picture of the environment it is in. Calculating what the updates would be in this case is possible and gives us deterministic dynamics.

Surprisingly the deterministic dynamics tell us that collusive outcomes should be very unlikely. As a result, the collusion we observe must be due to the noise that arises from the exploration of the Q-learners. In fact, if we simulate the trajectories of the Q-learners we see exactly how the noise leads to collusion. This happens through something like a phase transition (like water becoming gas when boiling). The details of how this occurs are fairly complicated (I’m still trying to wrap my head around it) and will be the topic of a future post.

Conclusion

In the first algorithm, collusion is written in the code. The algorithm is clearly trying to learn to collude, which could be used to make it illegal. In the second algorithm, collusion occurs because of the noise that arises due to exploration. This makes it harder to regulate, but it also seems to be a very specific combination of updates and noise that leads to this result, meaning that it might not be possible to implement the algorithm in realistic settings. The second algorithm also has no guarantees for performing well against competitors not using the same algorithm. But, both cases make it clear that it is very important to understand how algorithmic collusion exactly work, and to adapt regulations so that cooperation is endorsed but collusion is prohibited.

The featured image is taken by QuoteInspector.com.